There are a lot of little things that I come across in my work that I can’t easily find the answers to and then when i do find the answer, I always wonder if there are other pple who could potentially benefit from this. There have been many instances where I have felt like this and I previously wrote a blog post about something very niche which was really just for me and my own reference. But when I checked the stats for my website, that simple ‘FYI’ blog post turned out to be my most visited page.

Today (August 3rd, 2021, the original date of this post), a similar thing happened: I found a small useful method for a very specific problem and I want to share it because it might save many people a whole lot of googling and searching through complicated documentation. I might update this post over and over for the next little bit or I might create a whole new post for another tip/trick. I don’t know. But here we go.

[1] Subsetting a HuggingFace Dataset

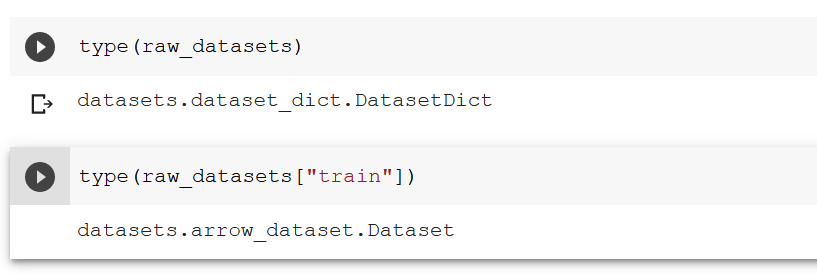

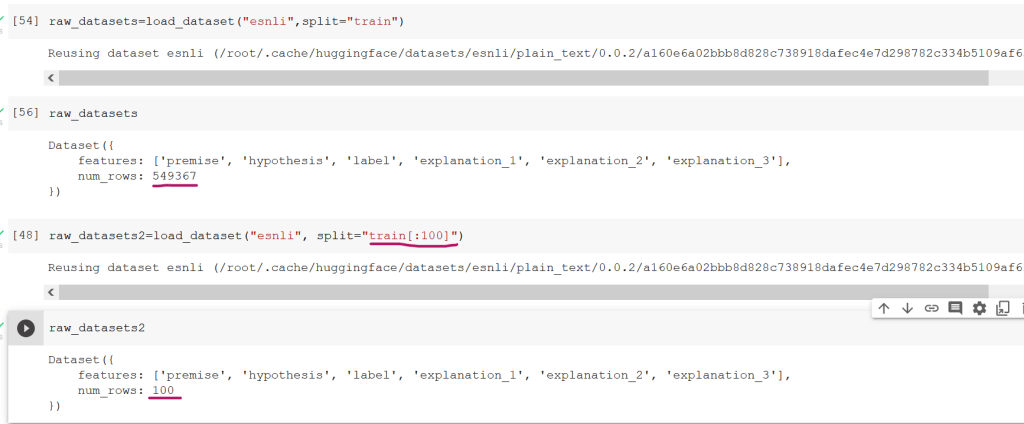

I’m assuming many experienced programmers and data scientists can think about their code logically in their heads and then proceed to write something that works. I can’t do that. I need to write the code, get stuck, and try random things over and over until the result looks like what i envisioned. Having said that, when working with data, especially a very large dataset, this kind of brute force method consumes a lot of time and computational resources. So I generally subset the data to include 100 rows and then play around with the code until i like what i see. I recently started working with the HuggingFace transformer models and using the information from their course, I wanted to fine-tune my own model. But the dataset I was using had over 550,000 rows and it was taking forever to train. So I just wanted to see how the training mechanism works when I run it on a smaller dataset.

But because the dataset structure is not the typical tabular type or dictionary type, I had no idea how to resolve the errors i was getting.

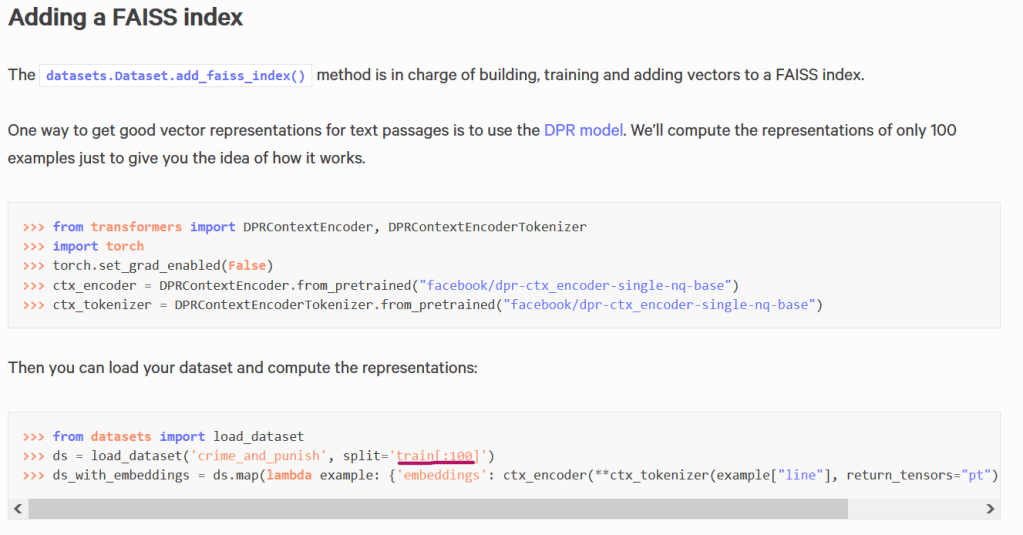

And then I randomly came across this page on my search where I saw a weird ‘split notation’ that I hadn’t seen before.

I didn’t find anything like this in the main dataset documentation (here). I found it randomly on an ‘indexing a dataset’ documentation (here).

now with 100 rows, or at least and idea of how to subset, I can train my model in a shorter amount of time and test out other tasks downstream.